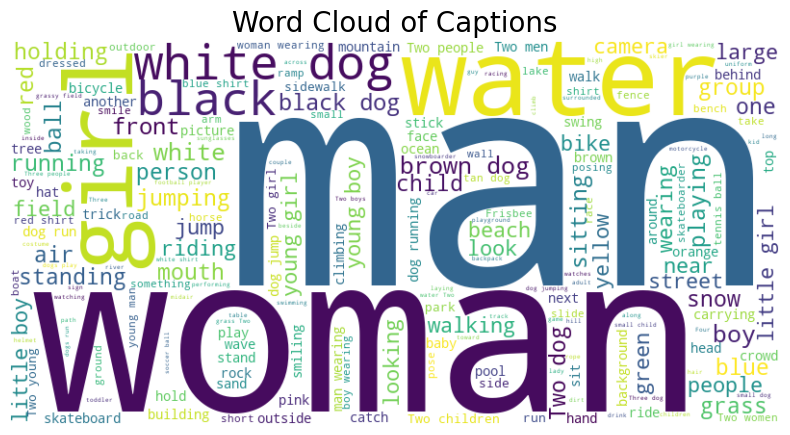

Word Cloud

Below is a word cloud of the most frequent words in the caption dataset. “Man” and “Woman” stand out as the two words used most often in the captions.
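
The ranking behind a word cloud is a plain word-frequency count. Here is a minimal sketch of that count, using a few invented captions and a tiny stop-word list (both are assumptions for illustration, not the real dataset or the preprocessing used in this series):

```python
from collections import Counter

# Hypothetical captions, invented for illustration (not taken from the dataset).
captions = [
    "a man rides a bike",
    "a woman walks a dog",
    "a man and a woman sit on a bench",
    "a man throws a ball",
]

# Assumed stop-word list; a real one would be much longer.
STOP_WORDS = {"a", "an", "and", "on", "the"}


def word_frequencies(texts, stop_words=STOP_WORDS):
    """Count how often each non-stop-word appears across all captions."""
    counts = Counter()
    for text in texts:
        counts.update(w for w in text.lower().split() if w not in stop_words)
    return counts


frequencies = word_frequencies(captions)
top_words = frequencies.most_common(2)
print(top_words)
```

A word-cloud library then only has to scale each word by its count, so the largest words in the image are the ones at the top of this ranking.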

Caption Model Architecture

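Below is the code that defines and compiles the neural network used for image captioning. The model projects the image features and the embedded caption words into the same 256-dimensional space, passes them through an LSTM layer as a single sequence, and outputs a probability distribution over the vocabulary for the next word of the caption.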

from keras.models import Model
from keras.layers import Input, Dense, Reshape, Embedding, LSTM, Dropout, add, concatenate
from keras.utils import plot_model

# Define input layers: the image feature vector and the padded caption prefix
input1 = Input(shape=(1920,))
input2 = Input(shape=(max_length,))

# Image feature layers: project to 256 dimensions, then add a time axis of length 1
img_features = Dense(256, activation='relu')(input1)
img_features_reshaped = Reshape((1, 256))(img_features)

# Text feature layers: embed the words and prepend the image vector as the first timestep
sentence_features = Embedding(vocab_size, 256, mask_zero=False)(input2)
merged = concatenate([img_features_reshaped, sentence_features], axis=1)
sentence_features = LSTM(256)(merged)

# Output head: add the image features back in, then predict the next word
x = Dropout(0.5)(sentence_features)
x = add([x, img_features])
x = Dense(128, activation='relu')(x)
x = Dropout(0.5)(x)
output = Dense(vocab_size, activation='softmax')(x)

# Build and compile the model
caption_model = Model(inputs=[input1, input2], outputs=output)
caption_model.compile(loss='categorical_crossentropy', optimizer='adam')

Model Visualization
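
The shapes and parameter counts that the plot and the summary report can be worked out by hand, which is a useful check that the layers fit together. The sketch below does that arithmetic in plain Python, without Keras. The helper `trace_caption_model` and the sizes `max_length=34` and `vocab_size=8000` are hypothetical, chosen only for illustration; the real values come from the tokenizer built earlier.

```python
def trace_caption_model(max_length, vocab_size, feature_dim=1920, units=256):
    """Return (layer, output shape, parameter count) rows for the caption model."""
    rows = []
    # Dense projection of the image vector: weights plus biases.
    rows.append(("img Dense", (None, units), feature_dim * units + units))
    # Reshape only adds a time axis of length 1; it has no parameters.
    rows.append(("img Reshape", (None, 1, units), 0))
    # Embedding: one row of `units` values per vocabulary entry.
    rows.append(("Embedding", (None, max_length, units), vocab_size * units))
    # Concatenation on axis 1 prepends the image step to the word steps.
    rows.append(("concatenate", (None, max_length + 1, units), 0))
    # LSTM: four gates, each with input weights, recurrent weights and biases.
    rows.append(("LSTM", (None, units), 4 * (units * (units + units) + units)))
    # The add keeps the shape; Dropout layers are omitted (no shape change).
    rows.append(("add", (None, units), 0))
    rows.append(("Dense 128", (None, 128), units * 128 + 128))
    rows.append(("softmax", (None, vocab_size), 128 * vocab_size + vocab_size))
    return rows


# Hypothetical sizes for illustration; the real values come from the tokenizer.
rows = trace_caption_model(max_length=34, vocab_size=8000)
for name, shape, params in rows:
    print(f"{name:<12} {str(shape):<18} {params:>10,}")
total_params = sum(params for _, _, params in rows)
print("total parameters:", f"{total_params:,}")
```

Two details stand out in the trace. The LSTM sees `max_length + 1` timesteps because the image vector is prepended as an extra step, and the `add` layer only works because the LSTM output and the image projection both have 256 units.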

# Plot model architecture
plot_model(caption_model, to_file='model_plot.png', show_shapes=True, show_layer_names=True)

# Model summary
caption_model.summary()

Data Generators
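
The model takes a padded word sequence of length `max_length` and predicts a softmax over `vocab_size` words, so every caption has to be expanded into several (prefix, next word) training pairs. The sketch below shows one common way to do that expansion in plain Python. It is an assumption about what a generator like `CustomDataGenerator` does internally, not its actual code; the helper names and the token ids (standing in for something like "startseq man rides bike endseq") are hypothetical.

```python
def pad_pre(sequence, max_length):
    """Left-pad with zeros (or keep the last max_length ids) to a fixed length."""
    trimmed = sequence[-max_length:]
    return [0] * (max_length - len(trimmed)) + trimmed


def one_hot(index, vocab_size):
    """Vector of length vocab_size with a single 1 at `index`."""
    vector = [0] * vocab_size
    vector[index] = 1
    return vector


def caption_to_samples(token_ids, max_length, vocab_size):
    """Expand one tokenized caption into (padded prefix, one-hot next word) pairs."""
    samples = []
    for i in range(1, len(token_ids)):
        prefix, next_word = token_ids[:i], token_ids[i]
        samples.append((pad_pre(prefix, max_length), one_hot(next_word, vocab_size)))
    return samples


# Hypothetical token ids for a five-token caption.
samples = caption_to_samples([1, 4, 7, 5, 2], max_length=6, vocab_size=10)
for prefix, target in samples:
    print(prefix, "->", target.index(1))
```

In a real batch, each of these pairs would also carry the image's feature vector as the first model input. Left-padding is used here because it matches the default of Keras's `pad_sequences`; one-hot targets are what `categorical_crossentropy` expects.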

The following code sets up custom data generators for training and validation. These generators load image-caption pairs from the dataset and prepare batches for model training.

# Training generator
train_generator = CustomDataGenerator(
    df=train,
    X_col='image',
    y_col='caption',
    batch_size=64,
    directory=image_path,
    tokenizer=tokenizer,
    vocab_size=vocab_size,
    max_length=max_length,
    features=features
)

# Validation generator (built from the test split)
validation_generator = CustomDataGenerator(
    df=test,
    X_col='image',
    y_col='caption',
    batch_size=64,
    directory=image_path,
    tokenizer=tokenizer,
    vocab_size=vocab_size,
    max_length=max_length,
    features=features
)

Stay tuned for next week, when we will finish the model!